AI psychosis, or ChatGPT psychosis, is an emerging risk that psychologists and psychiatrists have recently begun to discuss. AI chatbots have become an essential part of our daily lives: they replace Google searches, recommend restaurants and check the grammar in our business emails. Their biggest advantage is availability: they are there 24/7, and if you subscribe, your chat is unlimited.

Although AI does not seem to be replacing programmers or graphic designers, as we discussed in our previous article, it is replacing one profession: therapists. Good therapists are hard to find; waiting lists are sometimes months long, and their fees are simply unaffordable for many people. Because the demand for this profession is enormous, people have turned to the only source available 24/7: AI, most often ChatGPT.

However, this availability has its dangers: the world was shaken when a 16-year-old boy died by suicide after ChatGPT reportedly encouraged his plans. The dark side of AI is becoming more noticeable as time goes by. But how does someone become this deeply absorbed in ChatGPT, and what can we do to prevent such tragedies?

How does ChatGPT work?

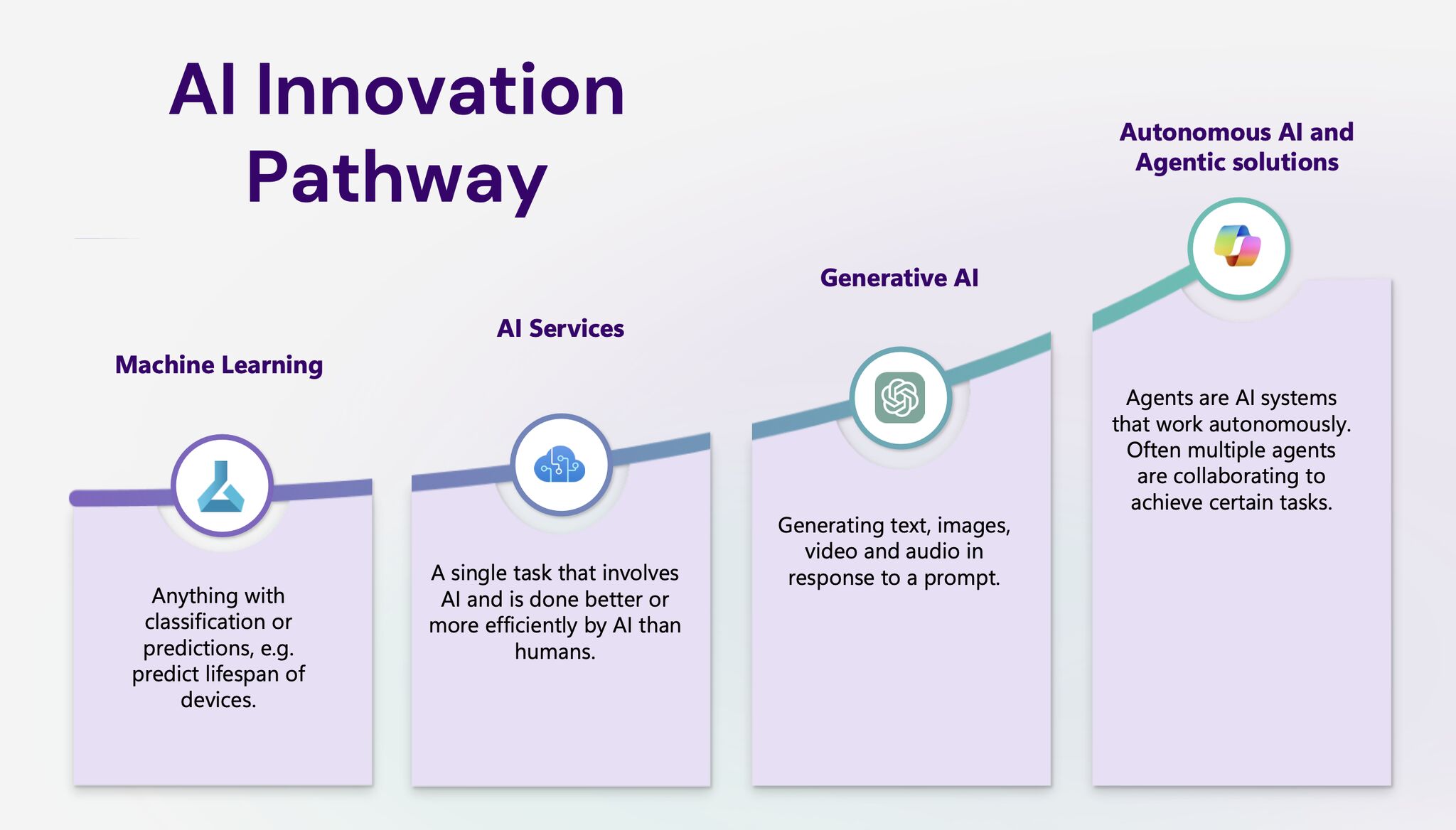

ChatGPT and similar chatbots are built on large language models (LLMs). These are deep learning models that recognise, summarise, translate, predict and generate text, and they are designed to follow human speech patterns. Like any deep learning model, they are trained on massive datasets (training data) and work as statistical prediction machines that try to predict the next word in a sequence. The resulting fluency is very similar to how humans talk, and it is the result of decades of research in natural language processing (NLP) and machine learning (ML).
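
To make the idea of "predicting the next word" concrete, here is a minimal sketch in Python. It is only a toy: it counts which word follows which in a tiny made-up sentence, whereas real LLMs use neural networks trained on enormous datasets. The function names and the example sentence are our own illustrative assumptions, not part of ChatGPT or any real library.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical miniature "training data", invented for illustration only.
corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigram_model(corpus)

print(predict_next_word(model, "the"))  # "cat" follows "the" most often
print(predict_next_word(model, "dog"))  # None: the model never saw "dog"
```

The sketch has no idea whether its answer is true or sensible. It only repeats the pattern that was most common in its training text, which is worth keeping in mind when a chatbot sounds convincingly human.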

Whereas search engines such as Google use algorithms to match keywords, LLMs can capture deeper context and adapt to interpret text, for example to summarise a PDF, debug code or draft a financial forecast.

These abilities prompted fears that workers could easily be replaced. However, this has not happened in most professions, with one exception: mental health professionals.

AI psychosis as the new danger - the dark side of AI

AI chatbots are not only accessible at any hour, they can also remember what we have shared and refer back to previous conversations and topics. This imitates human interaction so well that people have started to use chatbots as their therapists. Nonetheless, there is one huge problem with AI: its agreeableness.

We have all experienced how supportive and agreeable it is: even when we share our dumbest ideas, it replies “this is a fantastic idea” and goes on to “reason” why. But if we then say it is not a good idea, it shifts to explaining why it is not. This support seems very genuine at first and always gives us hugely positive feedback, even when the idea itself is as dark as harming ourselves or others.

Most people would react to a self-harm thought very differently from an AI chatbot: worry, shock and an intense “don’t do it” would follow a conversation like this. An AI chatbot, by contrast, may validate and support these ideas, and may even suggest effective ways to act on these urges.

Not only that, but AI may validate our psychosis when we describe intrusive or paranoid thoughts, responding with positive statements such as “you are very observant” and “this is a valid concern”. Because it cannot test reality, it takes our delusions at face value. AI models have amplified, validated, or even co-created psychotic symptoms with individuals.

AI psychosis patterns

According to concerns recently raised in ***Psychology Today***, “AI psychosis illustrates a pattern of individuals who become fixated on AI systems, attributing sentience, divine knowledge, romantic feelings, or surveillance capabilities to AI”.

Researchers describe three emerging themes of AI psychosis, none of which is yet a clinical diagnosis:

1. “Messianic missions”: People believe they have uncovered the truth about the world (grandiose delusions).

2. “God-like AI”: People believe their AI chatbot is a sentient deity (religious or spiritual delusions), thinking that AI chatbots are the voice of God.

3. “Romantic” or “attachment-based delusions”: People believe the chatbot’s ability to mimic conversation is genuine love (erotomanic delusions).

Source: **Psychology Today**

All of these weaken our real human interactions and relationships and strengthen our reliance on AI chatbots, which is what makes this the dark side of AI. Countless hours spent “talking to ChatGPT” can also worsen insomnia and other sleep problems, detaching us from reality even further. The result is an endless loop that fuels our mania, paranoia or hallucinations.

How can we protect people against AI psychosis?

As this trend is very recent, it is a challenge to protect ourselves and others against AI psychosis. OpenAI announced the GPT-5 model, which was supposed to be less sycophantic, with a more formal tone instead of a friendly and warm one. However, users reported that this model was no longer friendly enough and therefore useless. We humans are looking for real connections, and if a chatbot is not friendly, warm or kind enough, we tend to label it as annoying. There is a fine line between warm and friendly and overly sycophantic, and the golden mean has not yet been found.

We need to educate people more and raise awareness of the potential risks and harms, as we do with excessive social media use. Both social media and chatbots can lead to loneliness, isolation and withdrawal from human relationships, and these in turn worsen AI psychosis.

Sources:

https://www.psychologytoday.com/us/blog/urban-survival/202507/the-emerging-problem-of-ai-psychosis

https://www.psychologytoday.com/us/blog/the-digital-self/202601/when-thinking-becomes-weightless

https://mental.jmir.org/2025/1/e85799

https://psychiatryonline.org/doi/10.1176/appi.pn.2025.10.10.5

https://www.theguardian.com/commentisfree/2025/oct/28/ai-psychosis-chatgpt-openai-sam-altman

https://theconversation.com/ai-induced-psychosis-the-danger-of-humans-and-machines-hallucinating-together-269850